Online to Offline: Artificial Intelligence helps to design a personalized museum in the digital era

April 14, 2019

How do you visit a museum? If you are an explorer or an experience seeker, you would probably love to explore a museum the way Tracy Chevalier, the author of Girl with a Pearl Earring, does. She likes to do her own secret curation in a museum or gallery: she chooses a painting and tells a story about it. In other words, she doesn’t have to prepare anything before the trip. She just walks in and bonds with the artwork she picks. The story personalizes her museum experience and keeps the memory alive for a long time. You can do that, too. But most of us still rely on “1001 Paintings You Must See Before You Die”, “111 Works of Art You Must Not Miss”, or museum guides and maps to craft a plan and make the most of the visit. All of that information, however, consists of other people’s recommendations, experiences, and stories. It takes a while to turn someone else’s museum into your own, and I am not sure people will try again next time if it takes the same effort with today’s busy schedules.

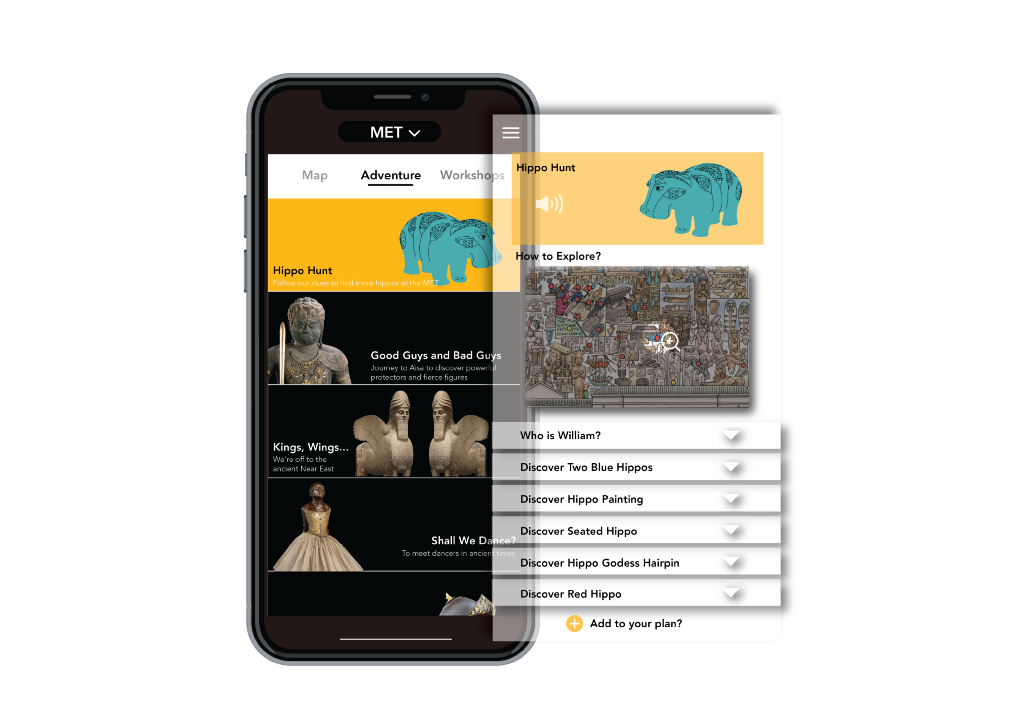

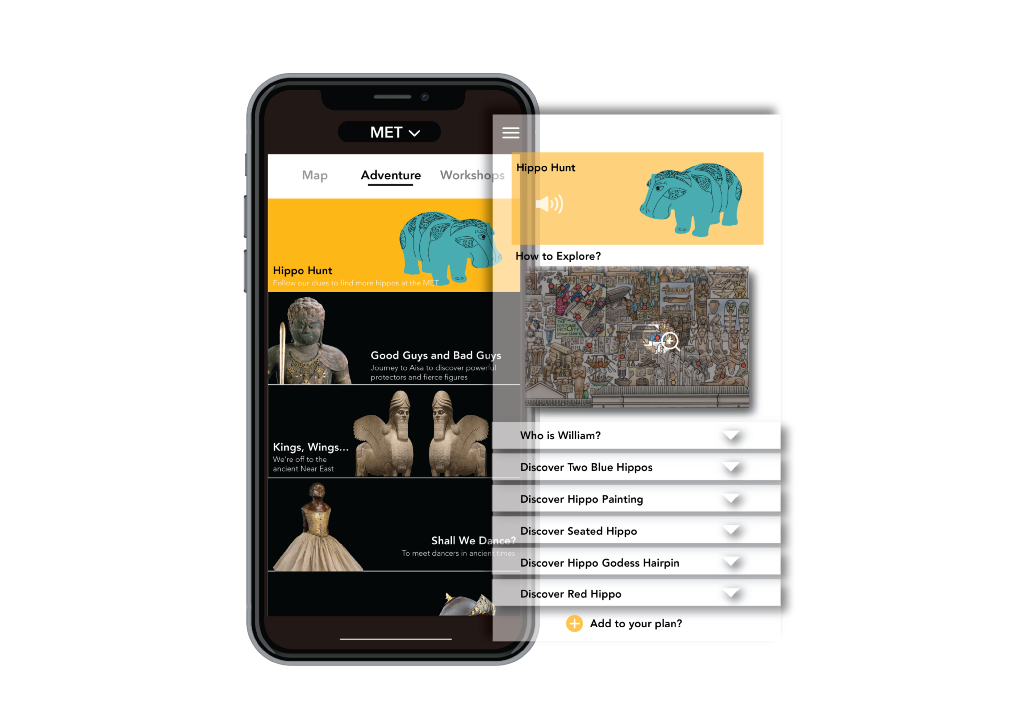

As a designer, I am always thinking about how to improve the museum experience and make the arts more accessible to people, so I am thrilled to see some prototypes coming out these days. They deserve the public’s attention. In December 2018, The Met teamed up with Microsoft and the Massachusetts Institute of Technology (MIT) to explore new ways to transform the museum experience using Microsoft AI. Within two days, they generated five initial AI prototypes. Artificial intelligence can help create experiences around art that people have never had before, find patterns that humans might miss, and help people learn more about themselves. This suggests that museums can become much closer to my life, and more about me.

One prototype, My Life, My Met, aims to help audiences discover meaningful connections between art and their everyday lives. The concept is to analyze people’s daily Instagram posts and replace their images with the closest-matching Open Access artworks from the Met collection. Something in 5,000 years of human history will resonate with you in some way, and AI image recognition can visualize that relationship with your routines and narrow the distance between you and art. For example, a photo of food or clothing that a user uploads might be replaced by an artist’s print from somewhere in art history in the Met collection. The huge amount of data available through the Met’s Open Access Program, over 400,000 images, can personalize this relationship and inspire regular explorers and experience seekers to learn more about art.
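The matching step described above can be pictured as a nearest-neighbour search. The sketch below is a minimal illustration, not the prototype’s actual implementation: it assumes that each Instagram photo and each Open Access artwork has already been turned into a numeric feature vector (an “embedding”) by some image recognition model, and it ranks artworks by cosine similarity to the photo. The function names, artwork IDs, and three-number vectors are hypothetical toy values.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def closest_artworks(photo_vec: list[float],
                     collection: dict[str, list[float]],
                     k: int = 1) -> list[str]:
    """Return the IDs of the k artworks whose embeddings best match the photo."""
    ranked = sorted(
        collection.items(),
        key=lambda item: cosine_similarity(photo_vec, item[1]),
        reverse=True,  # most similar first
    )
    return [artwork_id for artwork_id, _ in ranked[:k]]


# Hypothetical toy embeddings; a real system would get these from an image model.
collection = {
    "still-life-with-fruit": [0.9, 0.1, 0.0],
    "portrait-of-a-lady": [0.1, 0.9, 0.2],
    "landscape-at-dusk": [0.0, 0.2, 0.9],
}
photo_of_breakfast = [0.8, 0.2, 0.1]  # hypothetical embedding of a user's photo

print(closest_artworks(photo_of_breakfast, collection))  # → ['still-life-with-fruit']
```

A real system would likely use embeddings with hundreds of dimensions and an approximate nearest-neighbour index rather than sorting the whole collection for every photo, but the ranking idea is the same.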

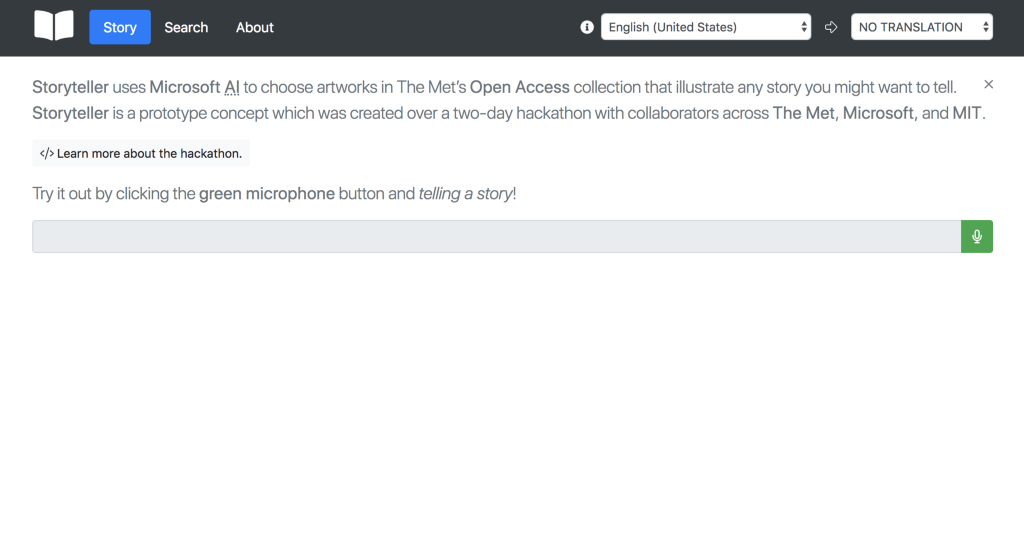

Storyteller is another prototype, one that fosters a new practice of bringing artworks to life by using voice recognition AI to find artworks in the Met’s collection. Keywords, sentences, conversations, or discussions captured by the AI trigger it to show relevant artworks. After finishing their story, users can choose to share the resulting thread of artworks from the Met on their social media.
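One simple way to picture this trigger mechanism is a keyword lookup over the speech transcript. This is a hedged sketch only: it assumes a voice recognition service has already produced a text transcript, and the keyword index and artwork IDs are hypothetical placeholders. The prototype may well use richer language understanding than exact word matching.

```python
import re

# Hypothetical keyword index: a word heard in the story -> an artwork ID to show.
KEYWORD_INDEX = {
    "horse": "horse-armor",
    "tea": "teapot",
    "sea": "seascape",
}


def artworks_for_story(transcript: str, index: dict[str, str]) -> list[str]:
    """Return artwork IDs triggered by keywords, in spoken order, without repeats."""
    thread: list[str] = []
    # Split the (assumed) transcript into lowercase words, ignoring punctuation.
    for word in re.findall(r"[a-z']+", transcript.lower()):
        artwork = index.get(word)
        if artwork is not None and artwork not in thread:
            thread.append(artwork)
    return thread


story = "We drank tea by the sea, then a horse ran past. More tea, please!"
print(artworks_for_story(story, KEYWORD_INDEX))  # → ['teapot', 'seascape', 'horse-armor']
```

The returned list plays the role of the “thread” of artworks a user could then share.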

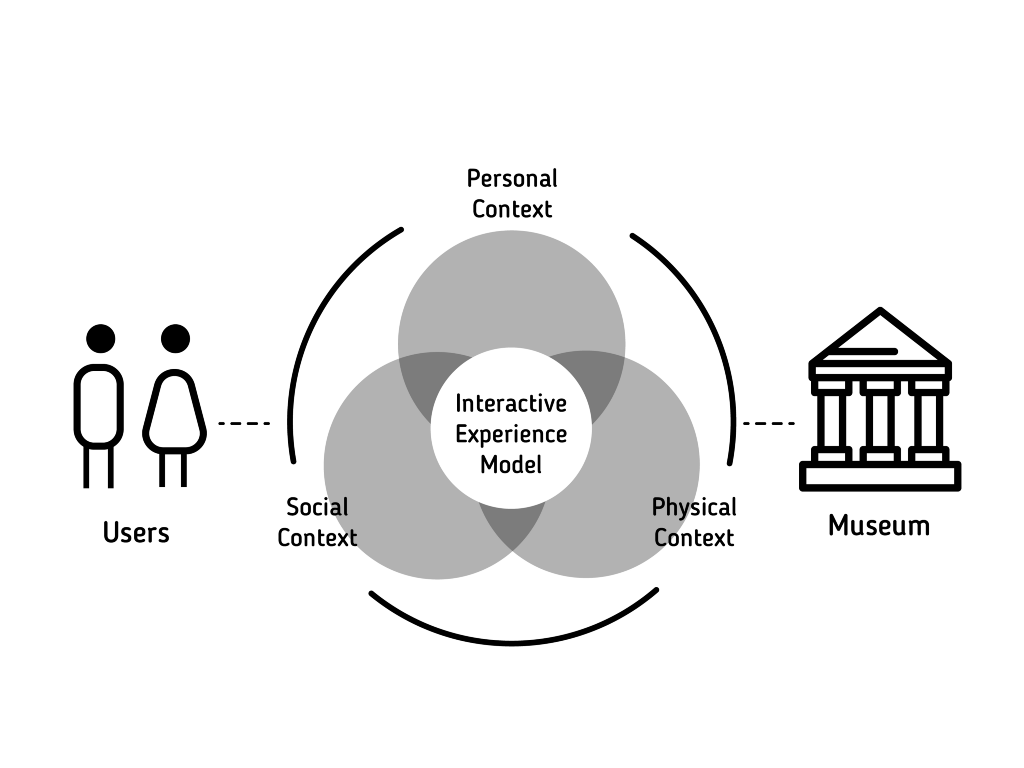

These two prototypes show how artworks can stick with audiences, a critical step in attracting new and more diverse visitors and carrying them from tasting art online to visiting museums offline. Online to Offline (O2O) describes systems that entice consumers in a digital environment to purchase goods or services from physical businesses. An engaging online encounter with an artwork can spark a desire to see the real object in the museum, so making objects “sticky” online can reinforce the habit of visiting museums.

Museum researchers John Falk and Lynn Dierking introduced the Interactive Experience Model, which conceptualizes the museum experience from the visitor’s perspective as an interaction among three overlapping contexts: the personal, the social, and the physical. The personal context includes prior knowledge, experiences, interests, motivations, and concerns. The social context relates to the specific groups who visit the museum. The physical context refers to the physical setting of the museum. The model helps explain the visitor’s total experience and offers a clear guide for designing positive, interactive museum experiences. In this light, the physical objects visitors see in the museum can link back to the objects they encountered online, so the knowledge, life experiences, and stories they bring extend into how they navigate the museum and help build a special emotional connection. Memories of what visitors saw, what they did, and how they felt about their experiences are the most frequently recalled and persistent aspects, and they relate to the physical context. When all of an individual’s sensory channels are engaged, the audience gets a more memorable experience. Our brains are not passive recipients of sensory input; experience refines what we perceive.

By identifying art through machine learning, AI can help users inside and outside the museum explore collections in new ways, create more direct interpersonal engagement, and shape a personalized, multi-sensory museum experience. The more data available for machine learning, the “smarter” its algorithms tend to become. Digital museums could learn more about their users, take full advantage of the opportunity to test ideas, and map the experience for each type of user more precisely. Imagine that each time you decide to visit a museum, AI generates a personal plan in which the objects and exhibitions all relate to your life, your interests, and your preferences. You can add or remove items from the proposal. It would save you a lot of time and help you feel comfortable before you go.

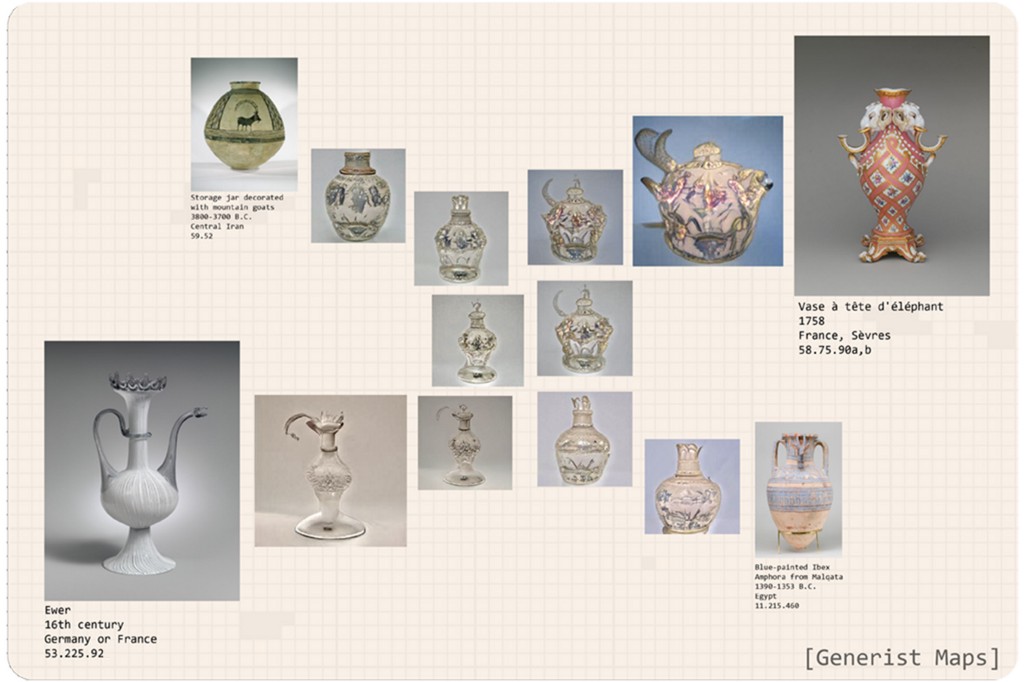

Another prototype, Generist Maps, will, I think, help enhance participatory experiences. It uses a technology called generative adversarial networks (GANs), a type of neural network. Once you have found a piece you want to study, you can use the tool to find its closest visual matches within the Met collection and so discover new areas of interest. GANs can conjecture possible variations of objects by plotting them out. What a GAN ends up producing is a new, third image that plausibly fits within the category for that kind of image. Machine learning can internalize the features selected for by contemporary curation, as well as the historical evolution of collectively and iteratively produced cultural artifacts. It has the potential to help the Met’s visitors navigate and understand its collection from a point of view more aligned with ancient and non-Western conceptions of art and image-making. It becomes possible to visualize the diversity and complexity of artistic creation and to envision artworks that may have existed, based on the artworks we know, and in that way gain a fuller understanding of visual culture. There are hundreds of crafted jars, armor, ewers, goblets, purses, teapots, and vases in the Met. It is hard for audiences to interact with these pieces, even when they really want to know more about their stories, background, or functions. But through the recombinant images it produces, Generist Maps can stimulate your imagination to make connections between two known things. I am thinking that maybe we could design an interactive installation or caption, or some type of puzzle game, to maximize the value of these crafts and improve our participatory experience.
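A common way to make a GAN produce an image “between” two known objects is to interpolate between their latent vectors, the random codes that the generator turns into images. We do not know exactly how Generist Maps builds its maps; the sketch below only assumes that general technique and shows the interpolation step. The latent vectors are hypothetical two-number toys (real GAN latents typically have hundreds of dimensions), and the trained generator that would turn each vector into an image is not shown.

```python
# Hypothetical latent codes; a trained GAN generator (not shown) would map
# each vector to an image.
LatentVector = list[float]


def interpolate(z_a: LatentVector, z_b: LatentVector, steps: int) -> list[LatentVector]:
    """Return `steps` evenly spaced latent vectors from z_a to z_b, inclusive."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    if len(z_a) != len(z_b):
        raise ValueError("latent vectors must have the same length")
    path = []
    for i in range(steps):
        t = i / (steps - 1)  # 0.0 at z_a, 1.0 at z_b
        path.append([a + (b - a) * t for a, b in zip(z_a, z_b)])
    return path


teapot_z = [0.0, 1.0]  # hypothetical latent code for a teapot image
vase_z = [1.0, 0.0]    # hypothetical latent code for a vase image
for z in interpolate(teapot_z, vase_z, steps=3):
    print(z)  # the middle vector is the "recombinant" in-between code
```

Linear interpolation is the simplest choice; when latent vectors are drawn from a Gaussian, spherical interpolation is often preferred because it keeps the intermediate vectors at a typical distance from the origin.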

By harnessing artificial intelligence, museum professionals could bring more audiences from online to offline and design more personalized and engaging museum experiences. AI could help museums reach and guide a wide range of new and diverse audiences on a global level.

References:

Tracy Chevalier: Finding the story inside the painting (TEDSalon London Spring 2012) https://www.ted.com/talks/tracy_chevalier_finding_the_story_inside_the_painting?language=en

Artificial Intelligence, Like a Robot, Enhances Museum Experiences by Jane L. Levere, The New York Times https://www.nytimes.com/2018/10/25/arts/artificial-intelligence-museums.html

IRIS+ Part Two: How to Embed a Museum’s Personality and Values in AI by Elizabeth Merritt, https://www.aam-us.org/2018/06/19/iris-part-two-how-to-embed-a-museums-personality-and-values-in-ai/

The Met x Microsoft x MIT: #ArtMeetsAI https://www.metmuseum.org/blogs/now-at-the-met/2019/met-microsoft-mit-art-open-data-artificial-intelligence https://www.microsoft.com/inculture/arts/met-microsoft-mit-ai-open-access-hack/

How Artificial Intelligence Can Change the Way We Explore Visual Collections https://metmuseum.org/blogs/now-at-the-met/2019/artificial-intelligence-machine-learning-art-authorship

“My Life, My Met”, initial prototype for “Sparking global connections to art through AI” by The Met, Microsoft and MIT, https://www.microsoft.com/inculture/uploads/prod/2019/02/mylife-my-met.pdf

The diagram illustrates the “topographic map” generated by Generist Maps. Image courtesy Sarah Schwettmann from the article “How Artificial Intelligence Can Change the Way We Explore Visual Collections”, https://www.metmuseum.org/blogs/now-at-the-met/2019/artificial-intelligence-machine-learning-art-authorship

“Impact of Machine Learning on Improvement of User Experience in Museums.” In 2017 Artificial Intelligence and Signal Processing Conference (AISP), 195–200. https://ieeexplore.ieee.org/document/8324080

French, Ariana. 2018. “On Artificial Intelligence, Museums, and Spaghetti.” https://medium.com/@CuriousThirst/on-artificial-intelligence-museums-and-spaghetti-b107cf1b4dc9

Falk, John H., and Lynn D. Dierking. Learning from Museums: Visitor Experiences and the Making of Meaning. AltaMira Press, 2000.

Online to Offline: Artificial Intelligence helps to design a personalized museum in the digital era was originally published in Museums and Digital Culture – Pratt Institute on Medium.